A good way to learn something is to try to explain it to somebody else. This is the essence of the Feynman method of learning [5]. I believe Feynman suggested that the “somebody” should be a six-year-old.

In the same vein, I have found that turning thoughts into text (and the odd LaTeX formula) and then reading it back to see if it makes sense is a useful tool for structuring thoughts and ideas [6]. I therefore from time to time put tentative insights into writing in this blog for myself and the world to review.

What clearly you cannot say, you do not know;

with thought the word is born on lips of man;

what’s dimly said is dimly thought.

Esaias Tegnér. Epilogue at an academic graduation in Lund, 1820. Unknown translator.

Learning is an iterative process, so I take the liberty of revising earlier posts as I learn more. Hopefully they will eventually coalesce into something reasonably consistent.

Active inference framework

I have set out to try to understand the active inference framework (AIF) that is claimed to provide a unified model for human and animal perception, learning, decision making, and action [2].

The theory of active inference was first proposed by Karl Friston, based on ideas that go back to the 19th century.

AIF has connections to several other topics that I’m interested in. There seem to be some interesting and potentially important associations between AIF and machine learning. AIF can possibly contribute to the understanding of the mental health conditions that affect many people today, especially the young. AIF is perhaps also, as Anil Seth claims in his book Being You [1], a waypoint on the road to understanding the greatest of mysteries, consciousness.

The central claim by Karl Friston [2] is that AIF explains both perception and action by biological organisms. I will introduce the framework in this post with a simple example explaining the basics of perceptual inference, i.e., the process of recognizing what one observes.

Key ideas

Organisms such as humans need to, and most of the time want to, stay within certain parameters vital for staying alive. They need, for instance, to keep their body temperature close to 37 °C. The active inference framework offers an elegant and parsimonious explanation for how the brain (controller) controls the state (system state) of the body (system) so that it stays within its vital manifold (the set of viable states). See also the Terminology page.

To be able to control a system, the controller must know what state the external system is in (e.g., what the body temperature is), what the preferred state is (e.g., a body temperature of 37 °C), and what actions will shift the system to a preferable (viable) state if it is not there (e.g., initiating shivering to raise the body temperature if it has fallen below 37 °C).

The controller does not have access to the system state directly. It only has access to observations of the system state through its sensors, e.g., through thermoreceptors in the body. The controller keeps an internal representation of the system state, a controller state, that it infers from the observations. Based on its internal controller state the controller can infer the proper action required to shift the system to a new system state (and the controller to a new controller state corresponding to the new system state).

The generative model is analogous to a map. If you can relate your physical location to a location on the map, you can use the map to infer the route to your destination in the real world. Analogously, if the controller knows its current controller state (location on the map) and a target controller state (the destination), it can, based on experience stored in its generative model, decide what action (route) to take to shift the controlled system to a new system state corresponding to the target controller state.

The generative model¹ describes the probabilistic relationship between the observations the organism makes and the corresponding controller states (“map states”), thereby implicitly creating a mapping between the system states (through the observations) and the controller states. The generative model is defined as the joint probability distribution \(p(s, o)\), where \(o\) denotes an observation and \(s\) a controller state.

According to basic probability theory, the generative model can be expressed as the product of two probability distributions, the likelihood and the prior:

$$p(s, o) = p(o \mid s)p(s)$$

The prior probability over controller states, \(p(s)\), defines the controller states that the agent expects or prefers to be in by assigning such states high probabilities (e.g., a body temperature of 37 °C). Some preferred states are hard-coded, like the body temperature, and some are decided by the organism itself depending on the context, like where to go next to find food. The prior distribution over controller states thus functions as a variable set of set-points for the organism’s control algorithm. The organism continually acts to reach and stay close to those set-points. This will be the topic of future posts.

Likelihoods, \(p(o \mid s)\), represent the probabilities of observations given a controller state \(s\).

Controller states don’t capture all aspects of system states. We see the world in a way that facilitates survival and procreation, not “Das Ding an sich”. In Donald Hoffman’s words, we have a user interface to the world [7]. An example of such a user-interface feature is our subjective experience of colors. Colors don’t exist in the real world, but they are useful to us. Matter in our intuitive sense doesn’t exist either (for that matter). At the bottom there are only quantum fields, the Pauli exclusion principle that keeps us from falling through the floor, and mostly empty space.

Some organisms update their generative models over time by learning from experience. The generative models of simple organisms are rather hard-coded.

Active inference represents a shift from the traditional view that an agent passively receives inputs through the senses, processes these inputs, and then decides on an action. Instead, according to AIF, the agent continuously generates predictions about the controller states it expects or prefers to experience. When there is a mismatch between the controller state predicted by the generative model and the actual controller state, a prediction error arises. The agent continuously tries to minimize the prediction error, either by updating its controller state to better match the controlled system state or by acting to bring the world more in line with its preferred controller state.

Whether to observe or to act depends on the context. When foraging for food, there is a strong emphasis on action to reach the preferred controller state (being satiated). In contrast, while watching a movie, the emphasis might lean more towards passive observation, continuously adjusting one’s controller states according to what plays out on the screen (having, hopefully, already reached the preferred controller state of enjoying a good movie).

A simple example

Let’s look at how a human actor, according to AIF, could optimally infer the state of the real world (system state) and map it onto a controller state based on their observation in an extremely simple scenario.

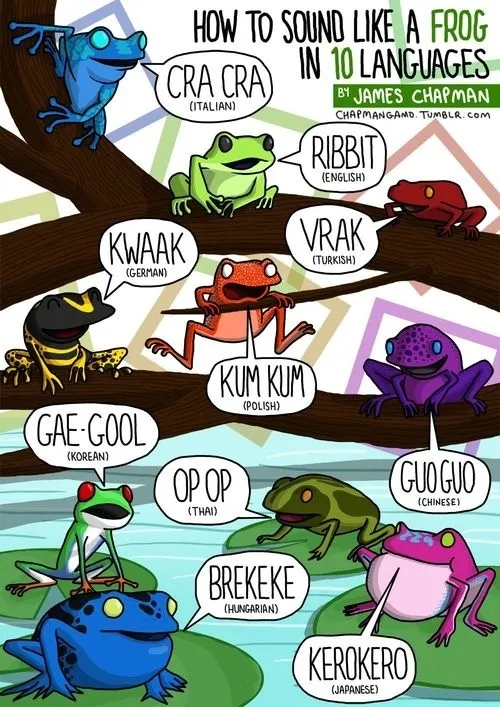

We assume that the actor has seen frogs and lizards before, so that they have a fairly accurate one-to-one mapping from the real-world amphibians and reptiles to corresponding controller states. We further assume that there are only two kinds of animals in a garden, namely frogs and lizards. Frogs are prone to jumping. Lizards also jump, but less often. We also assume that the actor has forgotten their eyeglasses in the house, so that they can’t really tell a frog from a lizard just by looking at it, but they can discern whether it jumps or crawls (yes, this is a contrived scenario getting more contrived, but please bear with me).

The actor knows, based on a census, the ratio of lizards to frogs in the garden. This knowledge, when quantified, can be expressed as a prior. The actor also knows from experience that frogs jump much more often than lizards do. This knowledge, when quantified, is called the likelihood. The prior and the likelihood together constitute the actor’s generative model.

The actor now observes a randomly chosen animal for two minutes. They can’t see what animal it is because of their poor eyesight, but they can see that it jumps. Which controller state does the actor arrive at, frog or lizard?

Nothing is certain

We cannot be certain about much in this world (except perhaps death and taxes). In the context of active inference there is uncertainty about the accuracy of the observation (aleatoric uncertainty) and about the accuracy of the brain’s generative model (epistemic uncertainty).

When I suggested to Bard, the AI, that when I see a cat on the street I’m pretty sure it’s a cat and not a gorilla it replied “it is possible that the cat we see on the street is actually a very large cat, or that it is a gorilla that has been dressed up as a cat”. So take it from Bard, nothing is certain.

When we are uncertain, we need to describe the world in terms of probabilities and probability distributions. We will say things like “the animal is a frog with 73% probability” (and therefore, in a garden with only two species, a lizard with 27% probability). Bayes’ theorem and AIF are all about probabilities, not certainties.

Notation

When I don’t understand something mathematical or technical, it is frequently due to confusing or unfamiliar notation or unclear ontology. (The other times it is down to cognitive limitations, I guess.) Before I continue, I will therefore introduce some notation that I hope I can stick to in this and future posts. I try to use notation that is as commonly accepted as possible, but unfortunately there is no single standard notation in mathematics.

Active inference is about observations, controller states, and probability distributions (probability mass functions and probability density functions). Observations and states can be discrete or continuous. Observations that can be enumerated or named, like \(\texttt{jumps}\), are discrete. Observations that can be measured, like the body temperature, are continuous.

Discrete observations and controller states are events in the general vocabulary of probability theory. In this post we only consider discrete observations and controller states.

Potential observations are represented by a vector of events:

$$\pmb{\mathcal{O}} = [\mathcal{O}_1, \mathcal{O}_2, \ldots, \mathcal{O}_n] = [\texttt{jumps}, \texttt{crawls}, \ldots]$$

\(\pmb{\mathcal{O}}\) is a vector of potential observations. It doesn’t by itself say anything about what observations have been made or are likely to be made. We therefore also need to assign a probability \(P\) to each observation, in this case \(P(\texttt{jumps})\) and \(P(\texttt{crawls})\) respectively. We collect these probabilities into a vector

$$\pmb{\omega} = [\omega_1, \omega_2, \ldots, \omega_n] = [P(\texttt{jumps}), P(\texttt{crawls}), \ldots]$$

The vector of observations \(\pmb{\mathcal{O}}\) and the vector of probabilities \(\pmb{\omega}\) are assumed to be matched element-wise, so that \(P(\mathcal{O}_i) = \omega_i\).

For formal mathematical treatment, all events need to be mapped to random variables, real numbers representing the different events. This is a technicality but will make things more mathematically consistent.

In our case, \(\texttt{jumps}\) could for instance be mapped to \(0\) and \(\texttt{crawls}\) to \(1\) (the mapping is arbitrary for discrete events without an order). In general we do the mapping \(\pmb{\mathcal{X}} \mapsto \pmb{X}\), where \(\pmb{\mathcal{X}}\) is a vector of events and each event is mapped to a real number, i.e., each element of \(\pmb{X}\) is in \(\mathbb{R}\).

The observation events are analogously mapped to observation random variables: \(\pmb{\mathcal{O}} \mapsto \pmb{O}\). When referring to a certain value of a random variable such as an observation, we use lower case letters, e.g., \(P(O = o)\).
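A minimal Python sketch of the event-to-random-variable mapping, assuming the arbitrary choice \(\texttt{jumps} \mapsto 0\) and \(\texttt{crawls} \mapsto 1\) from above. The names `observation_events`, `event_to_value`, and `value_to_event` are made up for illustration.

```python
# Hypothetical illustration: map discrete observation events to real-valued
# random-variable values. The mapping is arbitrary for unordered events.
observation_events = ["jumps", "crawls"]   # the vector of events

# Assign each event a real number: jumps -> 0.0, crawls -> 1.0.
event_to_value = {event: float(i) for i, event in enumerate(observation_events)}

# The inverse lookup recovers the event from a value o of the random variable O.
value_to_event = {value: event for event, value in event_to_value.items()}

print(event_to_value)       # {'jumps': 0.0, 'crawls': 1.0}
print(value_to_event[1.0])  # crawls
```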

Continuous observations, such as body temperature, and continuous controller states can be represented by random variables directly; there is no need for the event concept.

A probability mass function [3], \(p(x)\), is a function that returns the probability that a discrete random variable \(X\) takes the value \(x\), such that \(p(x) = P(X = x)\).

Note that a probability is denoted with a capital \(P\) while a probability mass function (probability distribution) is denoted with a lower case \(p\).

Since our observations are discrete, the probability mass function for the observations that we are interested in would be:

$$p(o) = \text{Cat}(o, \pmb{\omega})$$

This is called a categorical probability mass function and is basically a “lookup table” of probabilities such that \(p(o_i) = P(O = o_i) = \omega_i\). The interesting aspect of a categorical probability mass function is the vector of probabilities. In practical situations it is often useful to reason about the probabilities directly and to “forget” that we pull them out of a probability mass function. Read on to see what I mean.
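To make the “lookup table” idea concrete, here is a small Python sketch of a categorical probability mass function. The helper `categorical_pmf` is hypothetical, and the probabilities \(\pmb{\omega} = [0.25, 0.75]\) are made-up numbers for illustration, not values from the example.

```python
from math import isclose

def categorical_pmf(values, probs):
    """Return p(x) = P(X = x) for a categorical distribution Cat(x, probs).

    `values` and `probs` are matched element-wise, like O and omega above.
    """
    if len(values) != len(probs):
        raise ValueError("values and probabilities must have the same length")
    if any(p < 0 for p in probs) or not isclose(sum(probs), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    table = dict(zip(values, probs))   # the "lookup table"

    def pmf(x):
        # Values outside the support have probability 0.
        return table.get(x, 0.0)

    return pmf

# Hypothetical omega: P(O = 0) = P(jumps) = 0.25, P(O = 1) = P(crawls) = 0.75.
p_o = categorical_pmf([0.0, 1.0], [0.25, 0.75])
print(p_o(0.0), p_o(1.0), p_o(2.0))    # 0.25 0.75 0.0
```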

Discrete controller states can also be seen as events:

$$\pmb{\mathcal{S}} = [\mathcal{S}_1, \mathcal{S}_2, \ldots, \mathcal{S}_n] = [\texttt{frog}, \texttt{lizard}, \ldots]$$

The probability of each controller state is represented by the vector:

$$\pmb{\sigma} = [\sigma_1, \sigma_2, \ldots, \sigma_n] = [P(\texttt{frog}), P(\texttt{lizard}), \ldots]$$

The controller state events are mapped to random variables: \(\pmb{\mathcal{S}} \mapsto \pmb{S}\).

The probability distribution of the controller state random variable is:

$$p(s) = \text{Cat}(s, \pmb{\sigma})$$

Back to frogs and lizards

Assume that the prior probabilities held by the actor for finding frogs and lizards in the garden are:

$$\pmb{\sigma} = [0.25, 0.75]$$

This means that before an observation is made, the actor assigns a \(25\%\) probability to the controller state \(\texttt{frog}\) and a \(75\%\) probability to the controller state \(\texttt{lizard}\).

Assume that the likelihood of each species jumping within two minutes is represented by the vector:

$$[P(\texttt{jumps} \mid \texttt{frog}), P(\texttt{jumps} \mid \texttt{lizard})] = [0.8, 0.1]$$

\(P(\texttt{jumps} \mid \texttt{frog})\) should be read as “the probability of observing a jump given that the animal is a frog”.

This means that frogs are eight times more likely to jump than lizards. In other words, if there were as many frogs as lizards in the garden, a jumping animal would be a frog eight times out of nine; for every eight jumping frogs there would be one jumping lizard.

With three times as many lizards as frogs, the number of jumping lizards would go up by a factor of three, meaning that a jumping animal would be a frog only eight times out of eleven; for every eight jumping frogs there would now be three jumping lizards.
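The counting argument can be checked with exact fractions. This Python sketch assumes a hypothetical garden of 100 frogs and either 100 or 300 lizards; only the ratio matters. `frog_share` is a made-up helper name.

```python
from fractions import Fraction

P_JUMP_FROG = Fraction(8, 10)    # P(jumps | frog)
P_JUMP_LIZARD = Fraction(1, 10)  # P(jumps | lizard)

def frog_share(n_frogs, n_lizards):
    """Fraction of jumping animals that are frogs, counting expected jumpers."""
    jumping_frogs = n_frogs * P_JUMP_FROG
    jumping_lizards = n_lizards * P_JUMP_LIZARD
    return jumping_frogs / (jumping_frogs + jumping_lizards)

print(frog_share(100, 100))  # 8/9: as many frogs as lizards
print(frog_share(100, 300))  # 8/11: three times as many lizards
```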

The actor saw the animal jump. The probabilities for the animal being a frog and a lizard are therefore given by the vector:

$$[P(\texttt{frog} \mid \texttt{jumps}), P(\texttt{lizard} \mid \texttt{jumps})] = [\frac{8}{11}, \frac{3}{11}] \approx [0.73, 0.27]$$

meaning that the actor assigns a \(73\%\) probability to the controller state \(\texttt{frog}\) and \(27\%\) probability to the controller state \(\texttt{lizard}\). This is a major update from the prior of \(25\%\) probability of a \(\texttt{frog}\) and a \(75\%\) probability of a \(\texttt{lizard}\). The observation shifts the probability massively – but not entirely.
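The same result can also be approximated by brute force. The Python sketch below simulates many two-minute observations, drawing the species from the prior and the jump from the likelihood, and keeps only the observations where the animal jumped. The function name, the number of draws, and the seed are arbitrary choices for illustration.

```python
import random

def simulate_garden(n_observations, p_frog=0.25, p_jump_frog=0.8,
                    p_jump_lizard=0.1, seed=0):
    """Estimate P(frog | jumps) by simulating random two-minute observations."""
    rng = random.Random(seed)   # seeded generator for reproducibility
    jumps = 0
    frog_and_jumps = 0
    for _ in range(n_observations):
        is_frog = rng.random() < p_frog          # draw the species from the prior
        p_jump = p_jump_frog if is_frog else p_jump_lizard
        if rng.random() < p_jump:                # draw the observation from the likelihood
            jumps += 1
            if is_frog:
                frog_and_jumps += 1
    if jumps == 0:
        raise ValueError("no jumping animals observed; increase n_observations")
    # Condition on the observation: keep only the draws where the animal jumped.
    return frog_and_jumps / jumps

estimate = simulate_garden(200_000)
print(round(estimate, 3))   # should be close to 8/11, approximately 0.727
```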

With some more math

Below follows an alternative account of the same example, this time with a little more mathematics, introducing Bayes’ theorem.

As stated above, the prior \(p(s)\) and the likelihood \(p(o \mid s)\) together define the brain’s current model of the (very limited) world.
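The prior and the likelihood can be combined into the full joint distribution \(p(s, o)\). This NumPy sketch assumes, beyond the post’s numbers, that an animal either jumps or crawls during the two minutes, so that \(P(\texttt{crawls} \mid s) = 1 - P(\texttt{jumps} \mid s)\). Summing the joint table over the controller states gives the marginal \(p(o)\).

```python
import numpy as np

prior = np.array([0.25, 0.75])          # p(s): [P(frog), P(lizard)]

# Likelihood p(o | s): rows are states (frog, lizard),
# columns are observations (jumps, crawls).
# Assumption: each animal either jumps or crawls, so each row sums to 1.
likelihood = np.array([[0.8, 0.2],
                       [0.1, 0.9]])

# Generative model: p(s, o) = p(o | s) p(s), scaling each row by its prior.
joint = likelihood * prior[:, np.newaxis]

print(joint)              # rows: frog, lizard; columns: jumps, crawls
print(joint.sum())        # total probability, 1 up to rounding
print(joint.sum(axis=0))  # marginal p(o), approximately [0.275, 0.725]
```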

The probabilities assigned to the two controller states \(\texttt{frog}\) and \(\texttt{lizard}\) given the observation \(o\) are given by Bayes’ theorem:

$$p(s \mid o)= \frac{p(o \mid s)p(s)}{p(o)}$$

Let’s put some numbers in the numerator (\(\odot\) indicates element-wise multiplication):

$$[P(\texttt{jumps} \mid \texttt{frog})P(\texttt{frog}), P(\texttt{jumps} \mid \texttt{lizard})P(\texttt{lizard})] = [0.8, 0.1] \odot [0.25, 0.75] = [0.2, 0.075]$$

The denominator quantifies the probability of a jumping observation. In this simple case, assuming that there are only two types of animals in the garden and that their jumping propensities are known, it can be calculated using the law of total probability:

$$P(\texttt{jumps}) = P(\texttt{jumps} \mid \texttt{frog})P(\texttt{frog}) + P(\texttt{jumps} \mid \texttt{lizard})P(\texttt{lizard}) = 0.8 \cdot 0.25 + 0.1 \cdot 0.75 = 0.275$$
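With \(n\) discrete controller states and prior probabilities \(\pmb{\sigma}\), the same two steps generalize as follows; the garden is the case \(n = 2\) with \(o = \texttt{jumps}\).

$$P(O = o) = \sum_{j=1}^{n} P(O = o \mid S = s_j)\,\sigma_j$$

$$P(S = s_i \mid O = o) = \frac{P(O = o \mid S = s_i)\,\sigma_i}{\sum_{j=1}^{n} P(O = o \mid S = s_j)\,\sigma_j}$$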

The actor thus assigns the probabilities for the controller states \(\texttt{frog}\) and \(\texttt{lizard}\) respectively as follows:

$$[P(\texttt{frog} \mid \texttt{jumps}), P(\texttt{lizard} \mid \texttt{jumps})]= [0.2, 0.075] / 0.275 \approx [0.73, 0.27]$$
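The three steps (element-wise numerator, law of total probability, normalization) map directly onto a few NumPy array operations. This is a minimal sketch using the numbers above; the variable names are illustrative.

```python
import numpy as np

prior = np.array([0.25, 0.75])           # [P(frog), P(lizard)]
likelihood_jumps = np.array([0.8, 0.1])  # [P(jumps | frog), P(jumps | lizard)]

numerator = likelihood_jumps * prior     # element-wise product: [0.2, 0.075]
evidence = numerator.sum()               # P(jumps) by the law of total probability
posterior = numerator / evidence         # [P(frog | jumps), P(lizard | jumps)]

print(posterior.round(2))                # [0.73 0.27]
```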

This is the same result as above. Note how the fact that there are three times as many lizards as frogs, as expressed in the prior, increases the probability of the jumping animal being a lizard even though lizards don’t readily jump. Given the prior and the likelihood, Bayes’ theorem sometimes gives surprising but always correct answers.
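To see how strongly the prior pulls on the answer, this Python sketch repeats the calculation for a few hypothetical frog shares in the garden while keeping the likelihoods fixed at \(0.8\) and \(0.1\). The function name and the chosen prior values are for illustration only.

```python
def posterior_frog_given_jumps(p_frog, p_jump_frog=0.8, p_jump_lizard=0.1):
    """P(frog | jumps) by Bayes' theorem for a garden with only frogs and lizards."""
    numerator = p_jump_frog * p_frog
    evidence = numerator + p_jump_lizard * (1.0 - p_frog)  # law of total probability
    return numerator / evidence

# Hypothetical priors: from equal numbers down to one frog per twenty animals.
for p_frog in [0.5, 0.25, 0.1, 0.05]:
    posterior = posterior_frog_given_jumps(p_frog)
    print(f"P(frog) = {p_frog:.2f} -> P(frog | jumps) = {posterior:.2f}")
```

With these numbers the loop prints 0.89, 0.73, 0.47, and 0.30. Once frogs make up only one animal in ten, a jumping animal is already more likely to be a lizard than a frog.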

We will take a deeper look at the mathematics needed in real-world situations, outside of the garden, in future posts.

Links

[1] Anil Seth. Being You.

[2] Thomas Parr, Giovanni Pezzulo, Karl J. Friston. Active Inference.

[3] MIT Open Courseware. Introduction to Probability and Statistics.

[4] Ryan Smith, Karl J. Friston, Christopher J. Whyte. A step-by-step tutorial on active inference and its application to empirical data. Journal of Mathematical Psychology. Volume 107. 2022.

[5] Farnham Street Blog. The Feynman Technique: Master the Art of Learning.

[6] Youki Terada. Why Students Should Write in All Subjects. Edutopia.

[7] Donald Hoffman. The Case Against Reality.

1. A generative model approximates the full joint probability distribution between input and output, in this case between observations and states, \(p(s, o)\). This is in contrast to a discriminative model, which is “one way” and only models a conditional distribution, e.g., \(p(s \mid o)\). The likelihood \(p(o \mid s)\) on its own is likewise a one-way conditional distribution.